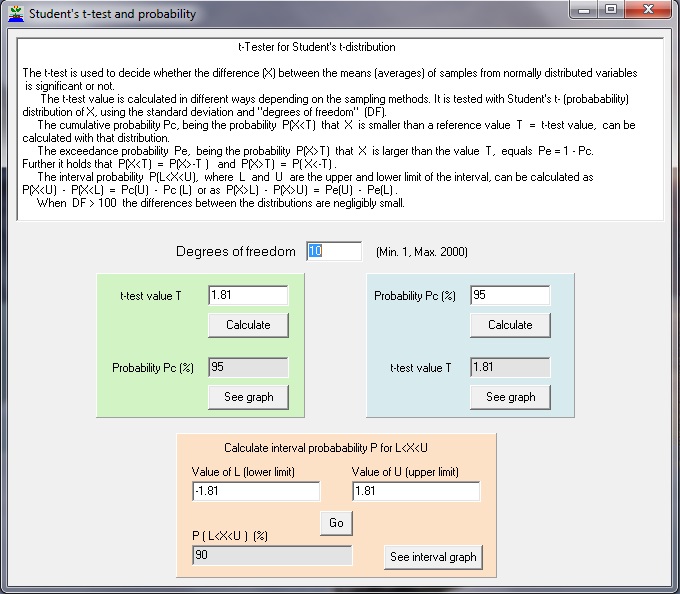

In a power analysis, there is always a pair of hypotheses: a specific null hypothesis and a specific alternative hypothesis. When comparing two proportions, the obvious effect size is the difference p1 − p2. But the power will not be the same for every pair of proportions with the same difference: for example, the power for p1 = 0.2 and p2 = 0.3 is not the same as the power for p1 = 0.3 and p2 = 0.4. This is the motivation for the h effect size (Cohen's h), which compares the two proportions on an arcsine-square-root scale rather than by their raw difference. Comparisons of dependent samples can be done with a t-test for paired samples. For paired and independent t-tests, the approach of Dupont and Plummer (1990) is … (PS: Power and Sample Size Calculation, version 3.1.6, October 2018). Here is an example using the 1 Sample t-Test, with charts produced via SigmaXL > Statistical Tools > Power & Sample Size Chart.
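To see why the raw difference is not enough, the sketch below computes Cohen's h = 2·arcsin(√p1) − 2·arcsin(√p2) for the two pairs above. It also computes an approximate two-sided power using the normal approximation on the arcsine scale. The per-group sample size of 100, α = 0.05, and the function names are illustrative assumptions, not values from the post.

```python
import math
from statistics import NormalDist

def cohens_h(p1: float, p2: float) -> float:
    """Cohen's h: difference of arcsine-square-root transformed proportions."""
    return 2 * math.asin(math.sqrt(p1)) - 2 * math.asin(math.sqrt(p2))

def approx_power_two_proportions(p1: float, p2: float, n_per_group: int,
                                 alpha: float = 0.05) -> float:
    """Approximate power of a two-sided two-proportion test (hypothetical helper).

    On the arcsine scale each transformed proportion has variance of about 1/n,
    so under H1 the test statistic is roughly normal with mean |h| * sqrt(n/2).
    """
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)
    shift = abs(cohens_h(p1, p2)) * math.sqrt(n_per_group / 2)
    # Probability of landing in either rejection region.
    return z.cdf(shift - z_crit) + z.cdf(-shift - z_crit)

n = 100  # hypothetical per-group sample size
for p1, p2 in [(0.2, 0.3), (0.3, 0.4)]:
    h = cohens_h(p1, p2)
    power = approx_power_two_proportions(p1, p2, n)
    print(f"p1={p1}, p2={p2}: diff={p2 - p1:.2f}, |h|={abs(h):.3f}, power~{power:.3f}")
```

Both pairs differ by 0.10, yet |h| and the approximate power are larger for 0.2 vs 0.3. This is because proportions closer to 0.5 have a larger sampling variance, p(1 − p).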

To determine Power & Sample Size for a 2 Sample t-Test, you can use the Power & Sample Size Calculator or Power & Sample Size with Worksheet. Click SigmaXL > Statistical Tools > Power & Sample Size Calculators > 2 Sample t-Test Calculator and enter Sample Size and Difference as shown. Note that we are calculating the power, or likelihood of detection, given that Mean1 – Mean2 = 1, with sample size for each group = 30, standard deviation = 1, significance level = .05, and Ha: Not Equal To (two-sided test). Click OK.

A power value of 0.97 is good, and this is the basis for the "minimum sample size n=30" rule of thumb used for continuous data. A test's power is the probability of correctly rejecting the null hypothesis when it is false, and it is influenced by the choice of significance level.

To determine Power & Sample Size using a Worksheet, click SigmaXL > Statistical Tools > Power & Sample Size with Worksheet > 2 Sample t-Test. A graph showing the relationship between Power, Sample Size and Difference can then be created.

For the 1 Sample t-Test, enter the Sample Size (N) and Difference columns in the Power & Sample Size: 1 Sample t-Test worksheet as shown. Note: by setting Standard Deviation to 1, the Difference values will be a multiple of Standard Deviation. Click OK. To create a graph showing the relationship between Power, Sample Size and Difference, click SigmaXL > Statistical Tools > Power & Sample Size Chart. Select Power (1 – Beta) and click Y Axis (Y); select Sample Size (N) and click X Axis (X1); select Difference and click Group Category (X2). Click OK.
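As a rough cross-check on the calculator's 0.97, the sketch below uses the normal (z) approximation to the power of a two-sided 2 sample t-test. The inputs are Mean1 − Mean2 = 1, standard deviation = 1, n = 30 per group, and significance level .05. This is an approximation, not SigmaXL's own algorithm; an exact t-based calculation typically gives a slightly lower value. The loop prints the kind of Power / Sample Size / Difference grid that the chart plots, with Standard Deviation set to 1 so that Difference is in standard-deviation units. The function name is hypothetical.

```python
import math
from statistics import NormalDist

def approx_power_two_sample(diff: float, sd: float, n_per_group: int,
                            alpha: float = 0.05) -> float:
    """Normal approximation to the power of a two-sided 2 sample t-test
    with equal group sizes and a common standard deviation (hypothetical helper)."""
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)
    # Standard error of Mean1 - Mean2 is sd * sqrt(2 / n).
    shift = abs(diff) / (sd * math.sqrt(2 / n_per_group))
    return z.cdf(shift - z_crit) + z.cdf(-shift - z_crit)

# Settings from the calculator example: difference 1, sd 1, n 30 per group, alpha .05.
print(round(approx_power_two_sample(diff=1, sd=1, n_per_group=30), 3))

# A small Power / Sample Size / Difference grid (sd = 1, so Difference is in sd units).
for diff in (0.5, 1.0, 1.5):
    row = [round(approx_power_two_sample(diff, 1, n), 3) for n in (10, 20, 30, 40)]
    print(f"Difference {diff}: {row}")
```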

Enter the sample standard deviation value of 0.6705 in … Ensure that Solve For Power (1 – Beta) is selected. Typically, we would like to see Power > 0.9, or equivalently Beta < 0.1. For the worksheet version, click SigmaXL > Statistical Tools > Power & Sample Size with Worksheets > 1 Sample t-Test. The power obtained here, 0.1836, is the probability of detecting the specified difference. Alternatively, the associated Beta risk is 1 − 0.1836 = 0.8164, which is the probability of failing to detect such a difference. The sample size required for each group in a 2 sample t-test can be determined with a formula that uses an approximation of the standard normal.
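Below is a standard form of the normal-approximation sample size formula for a 2 sample test. It assumes equal group sizes and a common standard deviation σ, and it may not be exactly the variant the original post used. Here Δ is the difference to detect, α the significance level, and 1 − β the target power:

$$
n = \frac{2\sigma^2\left(z_{1-\alpha/2} + z_{1-\beta}\right)^2}{\Delta^2}
$$

The Python sketch evaluates this formula and rounds up. The function name and the example inputs (Δ = 1, σ = 1, α = .05, power 0.9) are illustrative assumptions.

```python
import math
from statistics import NormalDist

def n_per_group_two_sample(diff: float, sd: float, alpha: float = 0.05,
                           power: float = 0.9) -> int:
    """Per-group sample size for a two-sided 2 sample test (normal approximation).

    Hypothetical helper; t-based software usually returns a slightly larger n.
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)   # two-sided critical value z_{1-alpha/2}
    z_beta = z.inv_cdf(power)            # z_{1-beta}
    n = 2 * (sd ** 2) * (z_alpha + z_beta) ** 2 / diff ** 2
    return math.ceil(n)                  # round up to a whole subject

print(n_per_group_two_sample(diff=1, sd=1))  # → 22 (Power 0.9, difference = 1 sd)
```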

Given any two values of Power, Sample Size, and Difference, SigmaXL will solve for the remaining selected third value.

You've got the data, you did the analysis, and you did not achieve 'significance.' So you compute power retrospectively to see whether the test was powerful enough. Of course it wasn't powerful enough; that's why the result isn't significant. We will only consider the statistics from Customer Type 3 here. The difference to be detected in this case is the difference between the sample mean and the hypothesized value. We will treat the problem as a two-sided test, i.e. Ha: Not Equal To, to be consistent with the original test.
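The "of course it wasn't powerful enough" point can be made concrete. For a two-sided z-test, the "observed" power, computed by plugging the observed effect back in as if it were the true effect, is a fixed function of the p-value. As a result, a non-significant result (p > .05) always yields observed power below about 0.5. The sketch assumes a z-test rather than the t-test discussed above, and the function name is hypothetical.

```python
from statistics import NormalDist

def observed_power_from_p(p_value: float, alpha: float = 0.05) -> float:
    """Post-hoc ("observed") power of a two-sided z-test, treating the
    observed |z| as if it were the true standardized effect (hypothetical helper)."""
    z = NormalDist()
    z_obs = z.inv_cdf(1 - p_value / 2)   # |z| that produced this p-value
    z_crit = z.inv_cdf(1 - alpha / 2)
    return z.cdf(z_obs - z_crit) + z.cdf(-z_obs - z_crit)

for p in (0.01, 0.05, 0.20, 0.50):
    print(f"p = {p:.2f} -> observed power = {observed_power_from_p(p):.3f}")
```

Because observed power only re-expresses the p-value, it adds no new information about whether the study was adequately designed.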
